
Otherwise, it creates a new graph, adds it to the container, and then launches it (Figure 1). If the key is found, the code launches the corresponding executable CUDA graph. Wherever function tight_loop occurs, you wrap it with code that fills the key and looks it up in your container. define the container (map) containing (key,value) pairs You define it and the container to be used in the source code such that its scope includes every invocation of function tight_loop. This triplet is the key used to distinguish graphs. Assume that, in this case, the variables first, params.size, and delta uniquely define tight_loop. When you encounter a parameter set already in the container, launch the corresponding CUDA graph. Whenever you encounter a new parameter set uniquely defining function tight_loop, add it to the container, along with its corresponding executable graph. The first approach introduces a container from the C++ Standard Template Library to store parameter sets. However, assuming that the same function parameter sets are encountered numerous times, you can handle this situation in at least a couple of different ways: Saving and recognizing graphs or updating graphs.

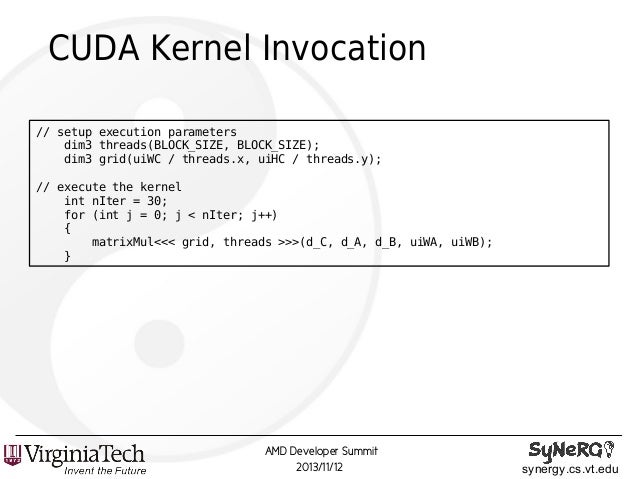

Obviously, if the parameters of the function change upon successive invocations, the CUDA graph representing the GPU work inside should change as well. In the actual application, it looked like the following code: void tight_loop(int first_step, MyStruct params, int delta, dim3 grid_dim, dim3 block_dim, cudaStream_t stream)įor (int step = first_step step >= 0 -step, params.size -= delta) It merely records all such operations and stores them in a data structure.įocus on the function launching the kernels. The call to the tight_loop function does not actually execute any kernel launches or other CUDA operations. turn this info into executable CUDA Graph “instance”ĬudaGraphInstantiate(&instance, graph, NULL, NULL, 0) ĬudaGraphLaunch(instance, stream) //launch the executable graph aggregate all info about the stream capture into “graph” Tight_loop() //function containing many small kernels if (!captured)ĬudaStreamBeginCapture(stream, cudaStreamCaptureModeGlobal) Next, wrap any actual invocation of the function with code to create its corresponding CUDA graph, if it does not already exist, and subsequently launch the graph. Place the declaration and initialization of this switch in the source code such that its scope includes every invocation of function tight_loop. You must introduce a switch-the Boolean captured, in this case-to signal whether a graph has already been created. If this function is executed identically each time it is encountered, it is easy to turn it into a CUDA graph using stream capture. ContextĬonsider an application with a function that launches many short-running kernels, for example: tight_loop() //function containing many small kernels In this post, I describe some scenarios for improving performance of real-world applications by employing CUDA graphs, some including graph update functionality.

Coverage and efficiency of such update operations have since improved markedly. They do incur some overhead when they are created, so their greatest benefit comes from reusing them many times.Īt their introduction in toolkit version 10, CUDA graphs could already be updated to reflect some minor changes in their instantiations. Graphs work because they combine arbitrary numbers of asynchronous CUDA API calls, including kernel launches, into a single operation that requires only a single launch.

One way of reducing that overhead is offered by CUDA Graphs. When those kernels are many and of short duration, launch overhead sometimes becomes a problem. In CUDA terms, this is known as launching kernels. Many workloads can be sped up greatly by offloading compute-intensive parts onto GPUs.
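The save-and-recognize approach described in this post, a container keyed on the parameters that define tight_loop, might be sketched on the host side as follows. This is only an illustration under stated assumptions: the names `ParamKey`, `CmpKeys`, `GraphCache`, and `get_or_create` are hypothetical, the size field is assumed to be an `int`, and the graph handle is left as a template parameter so the sketch compiles without the CUDA toolkit. In real code the handle would presumably be `cudaGraphExec_t`.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <tuple>

// Hypothetical key: the triplet assumed to uniquely define tight_loop.
struct ParamKey {
    int first_step;
    int size;   // stands in for params.size (type is an assumption)
    int delta;
};

// Strict weak ordering so ParamKey can serve as a std::map key.
struct CmpKeys {
    bool operator()(const ParamKey& a, const ParamKey& b) const {
        return std::tie(a.first_step, a.size, a.delta) <
               std::tie(b.first_step, b.size, b.delta);
    }
};

// GraphHandle would be cudaGraphExec_t in CUDA code; it is generic here
// so that the sketch builds with a plain C++ compiler.
template <typename GraphHandle>
class GraphCache {
public:
    // Returns the handle stored for key. build(key) is called only the
    // first time a key is seen, and its result is saved in the map.
    template <typename BuildFn>
    GraphHandle& get_or_create(const ParamKey& key, BuildFn build) {
        auto it = graphs_.find(key);
        if (it == graphs_.end()) {
            it = graphs_.emplace(key, build(key)).first;
        }
        return it->second;
    }

    std::size_t size() const { return graphs_.size(); }

private:
    std::map<ParamKey, GraphHandle, CmpKeys> graphs_;
};

// Small demonstration: an int plays the role of the executable graph,
// and the lambda plays the role of "capture and instantiate".
inline int count_builds_for_demo() {
    GraphCache<int> cache;
    int builds = 0;
    auto build = [&builds](const ParamKey& k) {
        ++builds;
        return k.first_step * 100 + k.delta;
    };
    cache.get_or_create({3, 1024, 8}, build);  // new key: graph is built
    cache.get_or_create({3, 1024, 8}, build);  // same key: graph is reused
    cache.get_or_create({5, 1024, 8}, build);  // different key: built again
    assert(cache.size() == 2);
    return builds;
}
```

In a CUDA build, the `build` callable would wrap the capture sequence (`cudaStreamBeginCapture`, the call to `tight_loop`, `cudaStreamEndCapture`, `cudaGraphInstantiate`), and the caller would pass the returned handle to `cudaGraphLaunch`. An ordered map with a comparator is one option; a hashed container would also work if a hash for the key is supplied.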
